I’ve wanted to make Markov chain generated sentences based on this blog for a long while. Wednesday night, I did. It took me under an hour! Why have I avoided it thinking it’d be difficult? Probably I thought it’d take a long time and would be lame.

NOT SO

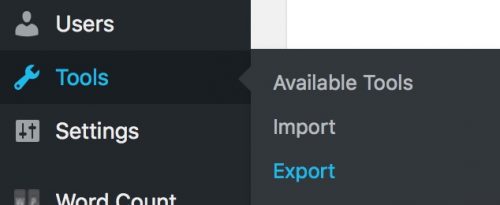

First, I exported the contents of my blog: Tools > Export

Out of that, you get a complete dump of your site’s content. This is a WordPress eXtended RSS file. What we care about are just two things from each post: the title and the content. To turn this XML into text I wrote a bit of PHP. It parses the <item> elements and then does a bit of string manipulation to remove all the HTML tags. It then dumps it into a file called “artlung.corpus.txt”.

<?php

$xmlstr = file_get_contents("artlung.wordpress.2018-02-22.xml");
$outputfilename = "artlung.corpus.txt";

$blogcontent = new SimpleXMLElement($xmlstr);
$all_text = array();

foreach ($blogcontent->channel->item as $item) {
    // The post body lives in <content:encoded>, in the "content" namespace.
    $content = (string) $item->children('content', true)->encoded;

    // Add a space after each tag and flatten newlines so words don't fuse
    // together once the tags are stripped.
    $content = str_replace('>', "> ", $content);
    $content = str_replace("\n", " ", $content);

    $all_text[] = strip_tags($content);
    $all_text[] = (string) $item->title;
}

// One entry per line, so post bodies and titles stay separate in the corpus.
$str = implode("\n", $all_text);
file_put_contents($outputfilename, $str);

?>

(I ran this locally on my Mac since I have PHP installed. Did you know you can run a one-line PHP server? Just run php -S localhost:8000 and you have a local web server! I use it all the time. Read about it here.)
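If you don't have PHP handy, the same extraction can be sketched in Python with only the standard library. This is a rough equivalent, not what I actually ran: the function names `strip_tags` and `extract_corpus` are made up for illustration, and I'm assuming the export stores each post body in a `<content:encoded>` element under the usual RSS content-module namespace.

```python
import re
import xml.etree.ElementTree as ET
from html import unescape

# Assumption: namespace URI used for <content:encoded> in the export.
CONTENT_NS = "{http://purl.org/rss/1.0/modules/content/}"


def strip_tags(markup):
    """Crudely swap HTML tags for spaces, decode entities, collapse whitespace."""
    text = re.sub(r"<[^>]+>", " ", markup)
    return re.sub(r"\s+", " ", unescape(text)).strip()


def extract_corpus(xml_text):
    """Return one line per post body and one line per post title."""
    root = ET.fromstring(xml_text)
    lines = []
    for item in root.iter("item"):
        body = item.findtext(CONTENT_NS + "encoded", default="")
        title = item.findtext("title", default="")
        for piece in (strip_tags(body), title.strip()):
            if piece:  # skip empty bodies and titles
                lines.append(piece)
    return "\n".join(lines)
```

To use it, read the export file into a string, pass it to `extract_corpus`, and write the result to "artlung.corpus.txt". The regex tag stripper is deliberately crude; it is fine for building a corpus but not for anything that needs real HTML parsing.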

Then I talked to my friend Kelly and asked her what library I might use to accomplish this task. She pointed me to NLP (Natural Language Processing) libraries. I did a bit of googling and found Markovify: “a simple, extensible Markov chain generator.” It seems very well documented and the README on GitHub had easy install instructions. I already had Python and pip installed, and running sudo pip install markovify in a terminal session did the trick.

Once I did that, I created a file “artlungmarkovify.py” and put this in it:

import markovify

# Get raw text as string.
with open("artlung.corpus.txt") as f:
    text = f.read()

# Build the model.
text_model = markovify.Text(text)

# Print ten randomly-generated sentences
for i in range(10):
    print(text_model.make_sentence())

# Print three randomly-generated sentences of no more than 140 characters
for i in range(3):
    print(text_model.make_short_sentence(140))

Then I ran python artlungmarkovify.py in terminal and BOOM! There’s some not-quite-Joe-Crawford blog sentences (every other sentence italicized to help distinguish the lines):

I expect she’ll be blogging about the interests of governance vs. conservative media in David Frum: Waterloo: I’ve been working at Yahoo has.

Still amazed we made together.

Nobody cares if you know it.

Immersed in his last name while he asked me that if life were these: Joe Crawford | Joe in LeahPeah’s GlassesNice picture, eh?world, meet olivia rex.

When I tend to ramble a bit, pulled nonnative plants, planted native ones.

Also, my feet and went with following the instructions on the NYTimes website Ted Koppel grills FEMA head Mike Brown.

Perhaps in expiating these exhibits we’ll actually find that when in 2004 and 2005.

Roth had a birthday card weighing one pound.
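To see roughly why the output reads this way, here's a minimal sketch of a word-level Markov chain. It is not Markovify's code: the names `build_chain` and `make_sentence`, the marker strings, and the `max_words` cap are all my own for illustration. The idea is that each pair of consecutive words maps to the list of words that followed that pair somewhere in the corpus, and a sentence is built by repeatedly picking one of those followers at random.

```python
import random
from collections import defaultdict

# Illustrative markers for sentence start and end (names are assumptions).
BEGIN, END = "__BEGIN__", "__END__"


def build_chain(sentences, state_size=2):
    """Map each run of `state_size` words to the words seen right after it."""
    chain = defaultdict(list)
    for sentence in sentences:
        words = [BEGIN] * state_size + sentence.split() + [END]
        for i in range(len(words) - state_size):
            state = tuple(words[i:i + state_size])
            chain[state].append(words[i + state_size])
    return chain


def make_sentence(chain, state_size=2, max_words=50, rng=random):
    """Walk the chain from the start state until END or the word cap."""
    state = (BEGIN,) * state_size
    out = []
    for _ in range(max_words):
        word = rng.choice(chain[state])
        if word == END:
            break
        out.append(word)
        state = state[1:] + (word,)  # slide the window forward one word
    return " ".join(out)
```

Two sentences that share a pair of words, like "I left them in town." and "We put them in storage.", can cross over at "them in" and produce "I left them in storage." That crossover is where the odd-but-plausible sentences come from. Markovify itself does more than this sketch, including splitting the text into sentences for you.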

I then loaded up the file into BBEdit and did some cleanup. I removed duplicate lines, removed things like URLs, and sorted all the individual lines. I used BBEdit’s Markup > Utilities > Convert HTML to Text to undo the HTML entities that were there. I can see that a clean set of sentences is important for getting a good Markov result.
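I did that cleanup by hand in BBEdit, but the same steps could be scripted. Here's a minimal sketch in Python, assuming a simple `http://` or `https://` pattern is good enough to catch the URLs; the function name `clean_corpus` and the regex are illustrative, not something I actually ran.

```python
import html
import re

# Assumption: URLs in the corpus start with http:// or https://.
URL_PATTERN = re.compile(r"https?://\S+")


def clean_corpus(text):
    """Decode HTML entities, drop URLs, then dedupe and sort the lines."""
    cleaned = set()  # a set removes duplicate lines for free
    for line in text.splitlines():
        line = html.unescape(line)
        line = URL_PATTERN.sub("", line)
        line = re.sub(r"\s+", " ", line).strip()
        if line:
            cleaned.add(line)
    return "\n".join(sorted(cleaned))
```

Sorting isn't needed for the Markov model, since line order doesn't affect it, but it makes the file much easier to scan for junk by eye.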

Now I have it generating lines like:

I think of the world of connections: Online social networks are powerful.

I left them in town.

This tension and disquietude sometimes spiral out of steak, chicken, pasta, and pretty much Nazis and haven’t caught on that beach, and the like.

People all over it.

I checked in at the cover here.

I don’t really care for that hair under your belt.

Last Friday, just 3 days to come.

Remembrances all over the weekend.

I’ve been blogging since before I was not particularly interested, but she generally agreed.

But it feels honest to do that, and I’m looking forward to with delight, fear, wonderment.

I’m going to tap my phones and tablets and e-readers shows us that it’s like I’m going to have never met her.

101 in 16 years for a logo, and right there for the stage.

As it is to say, the whole family is way out at subway stops.

My Grandfather retired and they don’t have an original design vinyl giant robot.

I’m doing lots of orange groves back here.

Clever folks will realize you can get to the rock line.

It’s been a few dozen people in this house in which he invited every passerby to lie in the surf, another from yesterday.

The fact that my increased notoriety brings more candy, I expect we won’t get to do serious work.

It will cost $1743 to fix the toilet which was leaking.I have posted in the meantime.A few weeks ago at an unexpected angle – young, white, female and with each player serving in turn.

Yes, San Diego Blog Meetup — Calling all Web Designers in San Diego very dearly.

Who else gets cool Swedish web developers take the train?

But the show must go to the car windows unrolled when the receiver to participate in a much less here.

It was a contest that year to year quickly.

If I finish a silly Flash game I want one of those old images.

It strikes me as a teen, working in Hollywood.

I love this. It’s entertaining silliness.

Now, I sent a few sentences to Leah and she said “it sounds a bit too much like President Trump”–which admittedly made me rather sad. But I can see that with a good corpus some rather interesting things might come out of it. My friend Kelly on reading some results said “It’s very interesting. Like a semantic word cloud.”

It really feels like this century will have more things like this. At some point computers will be so good at mimicking us that we won’t be able to tell the difference. What does that mean for us as a culture? Hard to say.
